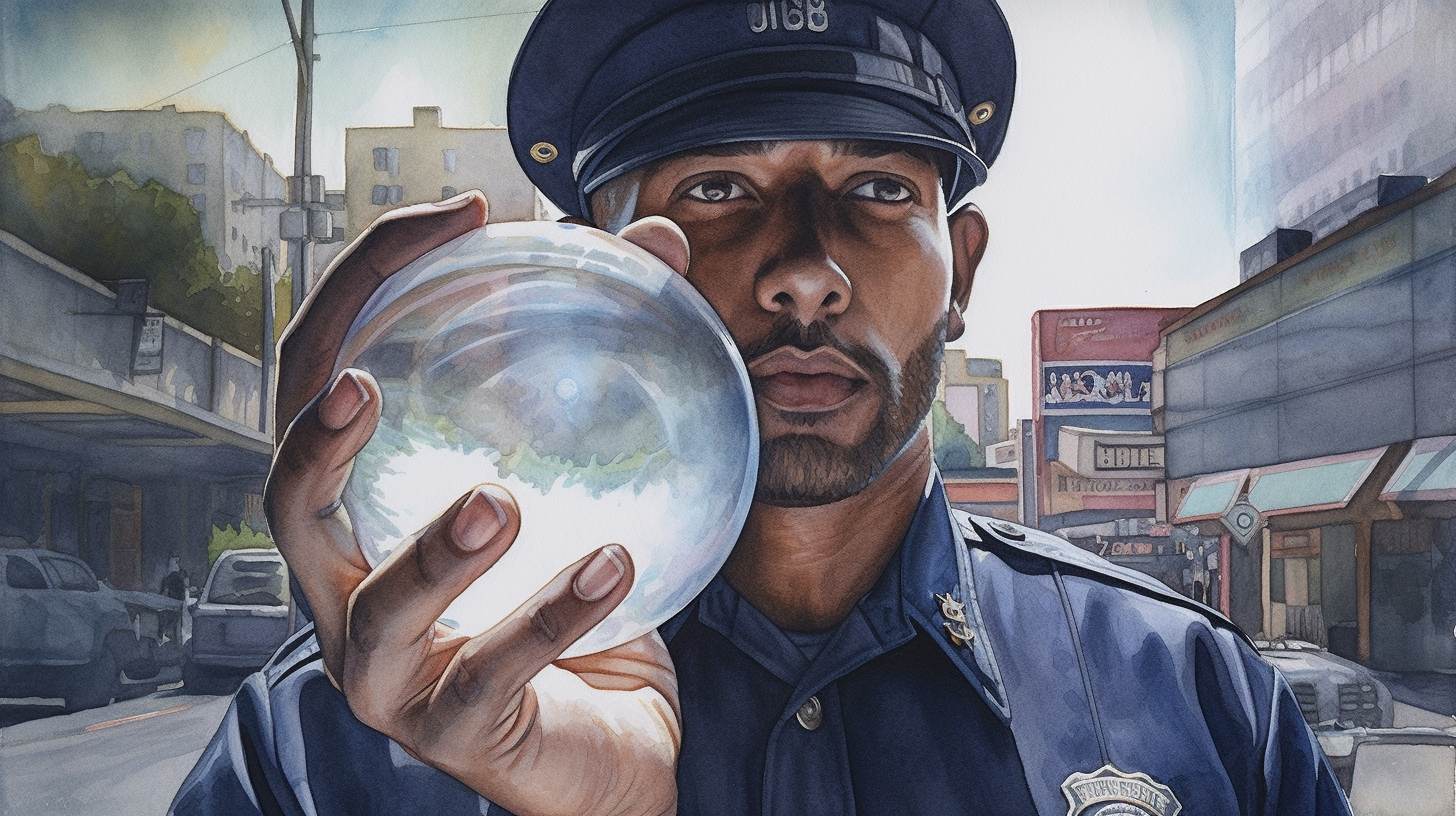

Tzu-Wei Hung and Chun-Ping Yen contribute to the discursive field of predictive policing algorithms (PPAs) and their intersection with structural discrimination. They examine how PPAs function, lay bare their potential to propagate existing biases in policing practice, and thereby question the presumed neutrality of technological interventions in law enforcement. Their investigation shows how structural injustices can take technological form, adding a critical dimension to our understanding of the relationship between modern predictive technologies and social equity.

An essential aspect of the authors’ argument is the proposition that the root of the problem lies not in the predictive algorithms themselves but in the biased actions and unjust social structures that shape their application. The article places this contention within a broader philosophical context, emphasizing the often-overlooked social and political underpinnings of technological systems. It thus makes a pertinent contribution to futures studies, prompting a more nuanced understanding of the interplay between (hotly anticipated) advanced technologies like PPAs and the structural realities of social injustice. The authors offer a robust challenge to deterministic narratives about technology, pointing to the integral role of social context in determining the impact of predictive policing systems.

Conceptualizing Predictive Policing

Hung and Yen scrutinize the relationship between data inputs, algorithmic design, and the resulting predictions. Their analysis disrupts the popular conception of PPAs as inherently objective and unproblematic, instead illuminating the mechanisms by which structural biases can be inadvertently incorporated into, and perpetuated through, these algorithmic systems. Their critique further elucidates the relational dynamics between data, predictive modeling, and the social contexts in which PPAs are deployed.
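To make this mechanism concrete, here is a minimal, hypothetical sketch in Python. It is not the authors' model, nor the Chicago PPA; the district names, recording rates, and the helpers `recorded_incidents` and `predict_risk` are illustrative assumptions. A deliberately simple predictor that scores each district by its share of recorded incidents is applied to two districts with identical underlying offending, once with biased recording and once with equal recording.

```python
def recorded_incidents(true_offences, recording_rate):
    """Expected number of offences per district that end up in police records."""
    return {d: true_offences[d] * recording_rate[d] for d in true_offences}


def predict_risk(records):
    """Naive frequency-based predictor: each district's share of recorded incidents."""
    total = sum(records.values())
    return {d: n / total for d, n in records.items()}


# Hypothetical numbers: both districts have the same underlying offending...
true_offences = {"A": 100, "B": 100}

# ...but district A has historically been patrolled more heavily, so a larger
# fraction of its offences is observed and recorded (assumed rates).
biased_recording = {"A": 0.5, "B": 0.25}
equal_recording = {"A": 0.5, "B": 0.5}

biased_risk = predict_risk(recorded_incidents(true_offences, biased_recording))
equal_risk = predict_risk(recorded_incidents(true_offences, equal_recording))

print({d: round(r, 3) for d, r in biased_risk.items()})  # {'A': 0.667, 'B': 0.333}
print({d: round(r, 3) for d, r in equal_risk.items()})   # {'A': 0.5, 'B': 0.5}
```

The predictor is identical in both runs; the disparity in its output comes entirely from how incidents were recorded. This is one simple sense in which bias can enter through the data rather than through the algorithm's design.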

The authors argue that the implications of PPAs extend beyond individual acts of discrimination: PPAs reinforce broader systems of structural bias and social injustice. By focusing on the role of PPAs in reproducing existing patterns of discrimination, the authors move the discussion beyond a simplistic focus on technological neutrality or objectivity and situate PPAs within a larger discourse on technological complicity in perpetuating social injustice. This perspective fundamentally challenges conventional thinking about PPAs, prompting a shift from an algorithm-centric view to one that acknowledges the socio-political realities that both shape and are shaped by these technological systems.

Structural Discrimination, Predictive Policing, and Theoretical Frameworks

The study goes further, arguing that discrimination perpetuated through PPAs is, in essence, a manifestation of broader structural discrimination within social systems. This perspective illuminates the connections between predictive policing and systemic power imbalances, making visible the complex ways in which PPAs can reify and intensify existing social injustices. The authors also caution that stakeholder involvement in predictive policing can backfire: giving all stakeholders equal participation may unintentionally replicate or amplify pre-existing injustices. On their analysis, the sources of discrimination lie in biased police actions that reflect broader social inequities, rather than in the algorithmic systems themselves. Addressing these challenges therefore requires not merely correcting algorithmic anomalies but transforming the unjust structures that those anomalies echo.
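The claim that PPAs can intensify, and not merely reproduce, biased police action can be illustrated with a toy feedback loop of the kind discussed in the algorithmic-fairness literature on "runaway" predictive policing. The sketch below is a hypothetical simplification, not the authors' analysis: `simulate_feedback`, its winner-take-all patrol rule, the detection rate, and all numbers are assumptions. Two districts offend at the same true rate, but patrols follow past records and only offences in the patrolled district are recorded.

```python
def simulate_feedback(initial_records, true_daily_offences, days, detection_rate=0.5):
    """Toy runaway-feedback loop (hypothetical model, not the authors').

    Each day the patrol goes to the district with the most cumulative recorded
    incidents, and only offences in the patrolled district get recorded.
    """
    records = dict(initial_records)
    for _ in range(days):
        # "Predict" the hot spot from past records alone.
        target = max(records, key=records.get)
        # Police presence determines what is observed: only the patrolled
        # district adds to the record, whatever happens elsewhere.
        records[target] += true_daily_offences[target] * detection_rate
    return records


start = {"A": 12, "B": 10}      # hypothetical, slightly uneven historical records
offending = {"A": 10, "B": 10}  # hypothetical: identical true daily offending

end = simulate_feedback(start, offending, days=30)
share_start = start["A"] / sum(start.values())
share_end = end["A"] / sum(end.values())
print(end)                                         # {'A': 162.0, 'B': 10}
print(round(share_start, 3), round(share_end, 3))  # 0.545 0.942
```

The allocation rule treats both districts symmetrically, yet a two-incident head start in the records grows into a near-monopoly on recorded crime, because police presence, not offending, determines what enters the data.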

The authors propose a transformative theoretical framework, the social safety net schema, which integrates PPAs into a broader social safety net. This schema reframes the purpose and functioning of PPAs, advocating their use not to penalize but to predict social vulnerabilities and direct the necessary assistance. It marks a paradigm shift from crime-focused approaches to a welfare-oriented model that situates crime within socio-economic structures. In this schema, the role of predictive policing is reimagined: crime predictions serve as indicators of systemic inequities that call for targeted interventions and the redistribution of resources. With this reorientation, predictive policing becomes a tool for revealing social disparities and improving welfare. Implementing the schema implies a commitment to equity rather than mere equality, one that attends to the nuances and complexities of social reality and targets the underlying structures that foster discrimination.

Community and Stakeholder Involvement, and Implications for Future Research

The authors address stakeholder involvement with both depth and nuance. While acknowledging that it is crucial to involve diverse stakeholders in the governance and control of predictive policing technology, they argue that equal participation could inadvertently reproduce existing social disparities. In their view, stronger representation of underrepresented groups in decision-making is vital, which requires additional resources and mechanisms to ensure that these groups' voices are heard and acknowledged in shaping public policy and social structures. Local communities play a central role in this process, acting as informed advocates who ensure that disadvantaged groups are properly understood and represented. The framework thus rests on a bottom-up approach to power and control over policing, securing democratic community control and fostering collective efficacy. This approach is intended to counterbalance persistent inequality and thereby reduce the likelihood of discrimination.

The analysis has notable implications for future research and policy-making. It holds that social structures, rather than the predictive algorithms themselves, are the catalyst for discriminatory practices in predictive policing, and that these structures should therefore be the primary object of scrutiny. This view calls for further research into the multifaceted intersection of social structures, law enforcement, and advanced predictive technologies. It also prompts consideration of how policies can be designed to reflect this understanding, with the aim of building a socially aware and equitable structure of technological governance. The authors' proposed social safety net schema for predictive policing offers a starting point for such a discussion. Future research might implement and test this schema, critically examine its effectiveness in mitigating the discriminatory impacts of predictive policing, and identify the adjustments needed to improve its efficiency and inclusivity. In short, future inquiry and policy revision should foster a context-sensitive, democratic, and community-focused approach to predictive policing.

Abstract

This paper examines racial discrimination and algorithmic bias in predictive policing algorithms (PPAs), an emerging technology designed to predict threats and suggest solutions in law enforcement. We first describe what discrimination is in a case study of Chicago’s PPA. We then explain its causes with Broadbent’s contrastive model of causation and causal diagrams. Based on the cognitive science literature, we also explain why fairness is not an objective truth discoverable in laboratories but has context-sensitive social meanings that need to be negotiated through democratic processes. With the above analysis, we next predict why some recommendations given in the bias reduction literature are not as effective as expected. Unlike the cliché highlighting equal participation for all stakeholders in predictive policing, we emphasize power structures to avoid hermeneutical lacunae. Finally, we aim to control PPA discrimination by proposing a governance solution: a framework of a social safety net.

Predictive policing and algorithmic fairness